Whether environmental and natural sciences, physics, medicine, engineering, or archaeology and art: all of them need a great deal of computing power for simulations, calculations, and visualizations, and therefore high-performance computers. Dive into the world of science and research with our reports. Discover new galaxies, the inner workings of the human body, the consequences of climate change, historic halls and buildings, fascinating works of art, and more. Or read about the latest achievements in IT.

At this year's Rocketeer Festival in Augsburg on Thursday evening, the Bavarian Digital Award B.DiGiTAL 2026 was…

The GENE code family can be used to model physical processes in nuclear fusion facilities on supercomputers. To…

For various projects, the LRZ develops tools for managing research data: a task from which the…

Over the past four years, the European Openwebsearch.EU project, with its open web index OWI, has … the foundations…

The LRZ experimented with photonic processors from Q.ANT in everyday operation and attests that the innovative…

Faster and better as a pair: for simulations of fluid dynamics, University College London trained…

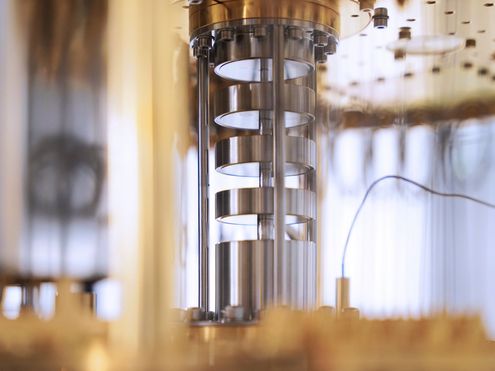

Combining quantum computing with GPU-accelerated supercomputing enables new approaches in…

The Future Computing team is evaluating the second generation of photonic processors from Q.ANT under real…

Euro-Q-Exa is just starting operation for research: physicist Jeanette Lorenz has already … computing time…