Introduction to hybrid programming in HPC

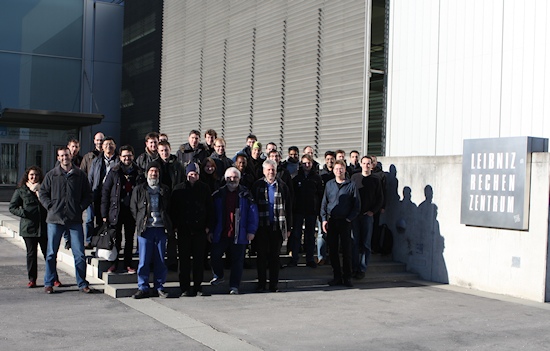

PRACE PATC Course: Introduction to hybrid programming in HPC @ LRZ, 14.1.2016

Most HPC systems are clusters of shared memory nodes. Such SMP nodes range from small multi-core CPUs to large many-core CPUs. Parallel programming may combine distributed memory parallelization across the node interconnect (e.g., with MPI) with shared memory parallelization inside each node (e.g., with OpenMP or MPI-3.0 shared memory). This course analyzes the strengths and weaknesses of several parallel programming models on clusters of SMP nodes, with special consideration given to multi-socket multi-core systems in highly parallel environments. MPI-3.0 introduced a new shared memory programming interface that can be combined with inter-node MPI communication. It can be used for direct neighbor accesses, similar to OpenMP, or for direct halo copies, and it enables new hybrid programming models. These models are compared with various hybrid MPI+OpenMP approaches and with pure MPI. Numerous case studies and micro-benchmarks demonstrate the performance-related aspects of hybrid programming.

Tools for hybrid programming, such as thread/process placement support and performance analysis, are presented in a "how-to" section. Hands-on exercises give attendees the opportunity to try the new MPI shared memory interface and explore some pitfalls of hybrid MPI+OpenMP programming. This course provides scientific training in Computational Science and, in addition, fosters scientific exchange among the participants.

The course is a PRACE Advanced Training Centre (PATC) event.

Location

Leibniz-Rechenzentrum

der Bayerischen Akademie der Wissenschaften (LRZ)

Boltzmannstr. 1

D-85748 Garching bei Muenchen

Course room II, H.U.010

How to get to the LRZ: see http://www.lrz.de/wir/kontakt/weg_en/

Lecturers

Dr. Georg Hager (RRZE)

Dr. Rolf Rabenseifner (HLRS)

Schedule

10:00 Welcome

10:05 Motivation

10:15 Introduction

10:45 Programming Models: Pure MPI

11:15 Coffee Break

11:35 MPI + MPI-3.0 Shared Memory (Talk + 2 Practicals)

13:10 Lunch

14:10 MPI + OpenMP

15:10 Coffee Break

15:30 MPI + OpenMP continued (Talk + Practical)

16:00 MPI + Accelerators

16:15 Tools

16:25 Conclusions

16:45 Q&A

17:00 End

Organisation & Contact

Dr. Volker Weinberg (LRZ)

Registration

Via the PATC page.

Slides

MPI+X - Hybrid Programming on Modern Compute Clusters with Multicore Processors and Accelerators

Final Remarks (LRZ)

Exercises

The exercises on MPI shared memory can be found in MPI.tar.gz, as described in

https://fs.hlrs.de/projects/par/par_prog_ws/practical/README.html

i.e., in the file

https://fs.hlrs.de/projects/par/par_prog_ws/practical/MPI.tar.gz

and there in the subdirectories MPI/course/*/1sided with

* = C for the C interface,

* = F_20 for the old Fortran mpi module, and

* = F_30 for the new Fortran mpi_f08 module.

The second exercise block, on hybrid MPI+OpenMP, can be found in

https://fs.hlrs.de/projects/par/par_prog_ws/practical/2016-HY-G-GeorgHager-Jacobi-w-MPI+OpenMP.tgz

with the pure MPI code in the C and Fortran directories and the hybrid MPI+OpenMP version in the solution subdirectories.

Evaluation

Please fill out the evaluation form after the course:

https://events.prace-ri.eu/event/450/evaluation/

Further courses and workshops @ LRZ

LRZ is part of the Gauss Centre for Supercomputing (GCS), which is one of the six PRACE Advanced Training Centres (PATCs) that started in 2012.

Information on further HPC courses:

- by LRZ: http://www.lrz.de/services/compute/courses/

- by the Gauss Centre for Supercomputing (GCS): http://www.gauss-centre.eu/training

- by the PRACE Advanced Training Centres (PATCs): http://www.training.prace-ri.eu/